I sometimes wonder: what IQ does a pocket calculator from 1990 have?

Is it zero? Undefined? Does the question even make sense?

And yet here in 2025, we see headlines declaring that large language models (LLMs) have IQs. Not just any IQs, but human-level ones — sometimes even higher than average. People are saying things like “GPT-4 has an IQ of 120.”

To me, this is absurd.

IQ was invented to measure human cognition relative to other humans. A score says how one person’s performance on certain problem-solving and reasoning tasks compares with a reference population of people. It was never meant to apply to machines. Giving a calculator or an LLM an IQ score is like giving your toaster an “empathy rating.” It’s a category error.
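To make the "relative to other humans" point concrete, here is a minimal sketch of how a deviation-style IQ score is typically computed. The mean of 100 and standard deviation of 15 are the common convention on modern tests; the function names and the norming numbers below are mine, made up purely for illustration.

```python
from statistics import NormalDist

# Common convention (assumed here): scores are scaled to mean 100, SD 15
# within a human norming sample.
IQ_MEAN = 100
IQ_SD = 15


def deviation_iq(raw_score, norm_mean, norm_sd):
    """Convert a raw test score to an IQ-style score relative to a norm group."""
    z = (raw_score - norm_mean) / norm_sd  # distance from the group average
    return IQ_MEAN + IQ_SD * z


def percentile(iq):
    """Share of the norm group expected to score at or below this IQ."""
    return NormalDist(IQ_MEAN, IQ_SD).cdf(iq)


# Hypothetical norming sample: raw scores average 40 with an SD of 8.
print(deviation_iq(48, norm_mean=40, norm_sd=8))  # 115.0
print(round(percentile(115), 3))                  # 0.841
```

The formula means nothing without `norm_mean` and `norm_sd`, and those come from a sample of people who took the same test. There is no such sample for calculators, toasters, or language models.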

But let me be clear before anyone thinks I’m dismissing the technology itself: LLMs and related systems are utterly brilliant. They are one of the most significant breakthroughs of our time, arguably on par with the invention of the computer itself. Like calculators in their day, they are tools that expand the range of what humans can do. Unlike calculators, they can generate fluent text, create coherent arguments, and mimic human conversation in a way that feels almost magical. They are astonishing, world-changing, and deserve every bit of recognition as a technological leap.

They can reason. They can be creative. They can write code, generate music and art, and even propose solutions to some of the hardest scientific challenges, like protein folding. These are extraordinary abilities. They are capabilities that just a decade ago would have seemed impossible for machines.

But here’s the key point: they are not intelligent.

Why Intelligence Isn’t the Right Word

Intelligence, at least in the human sense, implies awareness, understanding, goals, and reasoning grounded in that understanding. Humans understand what they are doing. They form concepts. They connect abstract ideas. They experience the world subjectively.

An LLM doesn’t do any of that. It doesn’t know what it’s saying. It doesn’t understand meaning. It doesn’t have beliefs or desires. It doesn’t “reason” in the way humans do. It is, at its core, a statistical pattern engine — an extraordinarily powerful one, capable of generating text so human-like that it can trick us into thinking there’s a mind behind it.

But there isn’t.

Calling these systems “intelligent” confuses people into thinking they’re something like us. They’re not. They’re tools. Sophisticated, transformative tools — but tools nonetheless.
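If "statistical pattern engine" sounds abstract, here is a deliberately tiny toy that shows the general idea: count which word tends to follow which, then generate text by sampling from those counts. To be clear, this is not how a real LLM is built. Real models use large neural networks over tokens and far more context. The function names and the little corpus are hypothetical, chosen only for illustration.

```python
import random
from collections import Counter, defaultdict


def train_bigrams(text):
    """Count, for each word, which words follow it and how often."""
    words = text.split()
    follows = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def generate(follows, start, length, seed=0):
    """Sample a continuation word by word, weighted by the observed counts."""
    rng = random.Random(seed)
    out = [start]
    for _ in range(length - 1):
        options = follows.get(out[-1])
        if not options:
            break  # this word was never followed by anything in the corpus
        words, counts = zip(*options.items())
        out.append(rng.choices(words, weights=counts, k=1)[0])
    return " ".join(out)


corpus = "the cat sat on the mat and the cat ate the fish"
model = train_bigrams(corpus)
print(model["the"])               # counts of the words seen after "the"
print(generate(model, "the", 6))  # a short sampled continuation
```

The output can look like a sentence, but nothing in the program knows what a cat or a mat is. It only knows what tended to come next.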

The Chess Engine Test

Consider a chess engine. Does it have an IQ? Of course not. It can beat the best human grandmasters, but that doesn’t make it “intelligent” in any general sense. It doesn’t understand chess the way a person does. It doesn’t experience triumph or defeat. It calculates moves.

Or take a self-driving car. Does it have an IQ? Does it understand traffic laws, or is it just an enormous collection of rules and statistical models optimized to keep it between the lines?

Or a diffusion model that generates photorealistic images. Does it have an IQ in “visual reasoning”? Or is it simply turning random noise, step by step, into pixel patterns it learned from training images, in ways we find astonishing?

If we go down this road, we could just as easily start handing out IQ scores to calculators, washing machines, or Roombas. The absurdity speaks for itself.

Why This Matters

Some people might argue this is just semantics. Who cares if we call these things “intelligent” or not?

But words matter. When we say LLMs are intelligent, or that they have an IQ, we risk misleading people into believing they are something like humans — that they think, that they understand, that they might someday “wake up.”

This leads to misplaced fears, misplaced hopes, and misplaced trust. If we treat these systems as intelligent, we risk either overestimating them (trusting them with things they can’t do) or underestimating them (worrying about machines that “wake up” while ignoring the real and unique risks they do pose).

By being precise — by calling them brilliant tools rather than intelligent beings — we give them the recognition they deserve without layering on confusion.

A New Category Needed

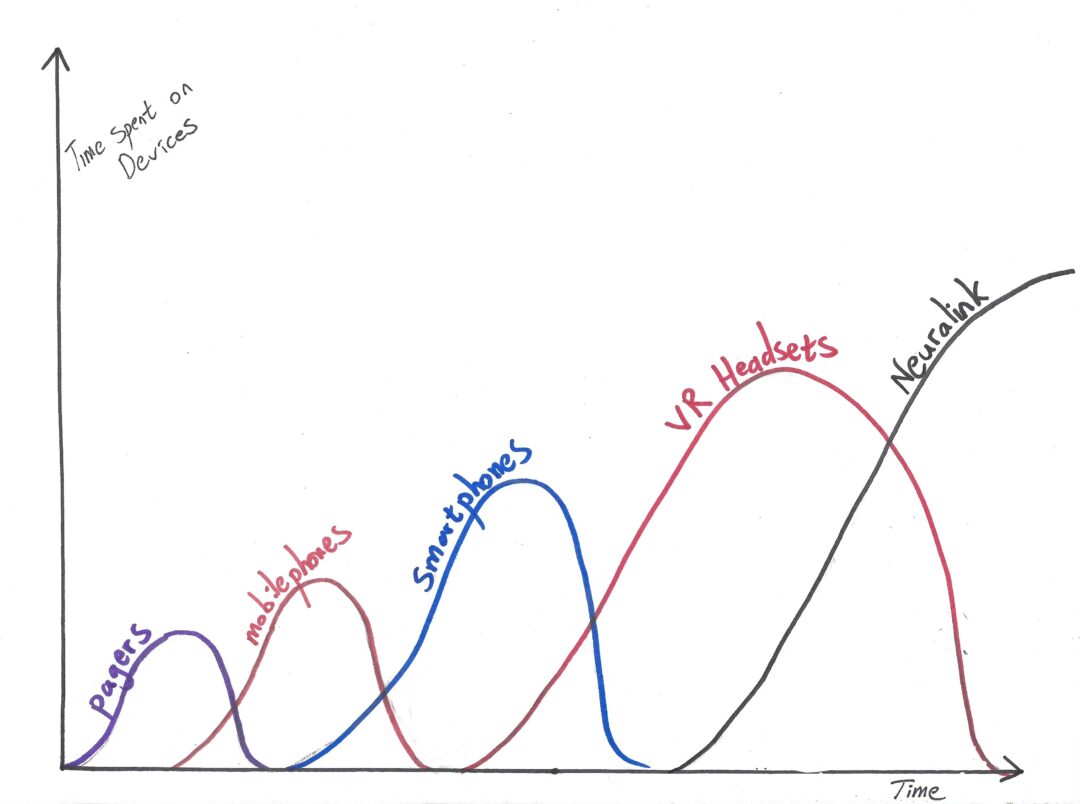

In the long run, people may look back at 2025 and laugh at the way we tried to fit machine performance into human-shaped categories.

We’ll seem naïve for thinking that “IQ” was a meaningful way to measure a language model. We’ll seem misguided for insisting on the label “artificial intelligence,” as though these tools were minds in the making rather than groundbreaking systems of computation.

The real challenge — and opportunity — is not to ask “What IQ does AI have?” but to invent new categories of ability. We need fresh language to describe what these systems actually are and what they can actually do. Because they are not people, and “intelligence,” at least as humans define it, might not be the right yardstick at all.

Brilliant Tools, Not Minds

So let me say it clearly:

- LLMs are revolutionary.
- They are a true technological breakthrough.
- They are changing the world in profound ways.
- They can reason, create, code, and even tackle scientific problems once thought untouchable.

But they are not intelligent.

Not in the human sense. Not in the sense of having an IQ. Not in any way that makes sense outside the marketing hype.

We should celebrate them for what they are: brilliant, transformative tools that extend human capability in ways we never thought possible.

But we should also be honest about what they are not.

Because someday, when the history of this era is written, people will see through the hype. They’ll recognize the brilliance of these systems — but they’ll also laugh at the idea that we ever tried to measure a machine’s “IQ.”

And maybe that’s for the best.
